DeepMill: Neural Accessibility Learning for Subtractive Manufacturing

ACM SIGGRAPH 2025

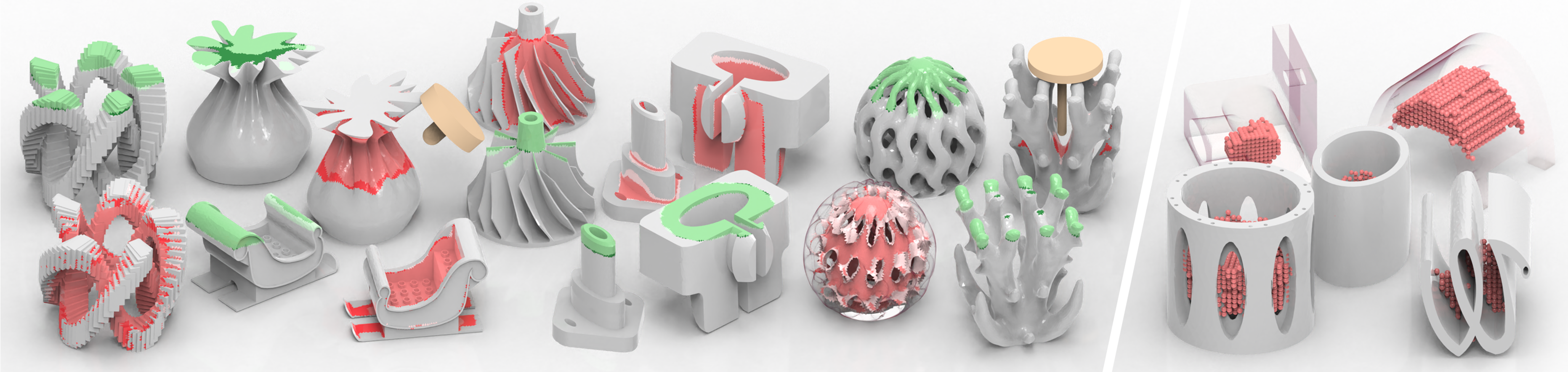

Fig. 1. We propose an octree-based neural network for cutter accessibility and severe occlusion detection on arbitrary meshes. Compared to traditional geometric methods, our network significantly reduces computation time, enabling real-time prediction during shape editing. Across various CAD and freeform model datasets with different mesh resolutions, the prediction accuracy for inaccessible and occlusion regions reaches 94.7% and 88.7%, respectively.

Abstract

Manufacturability is vital for product design and production, with accessibility being a key element, especially in subtractive manufacturing. Traditional methods for geometric accessibility analysis are time-consuming and struggle with scalability, while existing deep learning approaches to manufacturability analysis often neglect geometric challenges in accessibility and are limited to specific model types. In this paper, we introduce DeepMill, the first neural framework designed to accurately and efficiently predict inaccessible and occlusion regions under varying machining tool parameters, applicable to both CAD and freeform models. To address the challenges posed by cutter collisions and the lack of extensive training datasets, we construct a cutter-aware dual-head octree-based convolutional neural network (O-CNN) and generate a dataset for inaccessible and occlusion region analysis, covering a variety of cutter sizes, for network training. Experiments demonstrate that DeepMill achieves 94.7% accuracy in predicting inaccessible regions and 88.7% accuracy in identifying occlusion regions, with an average processing time of 0.04 seconds for complex geometries. These results indicate that DeepMill implicitly captures both local and global geometric features, as well as the complex interactions between cutters and intricate 3D models.

Video

DeepMill

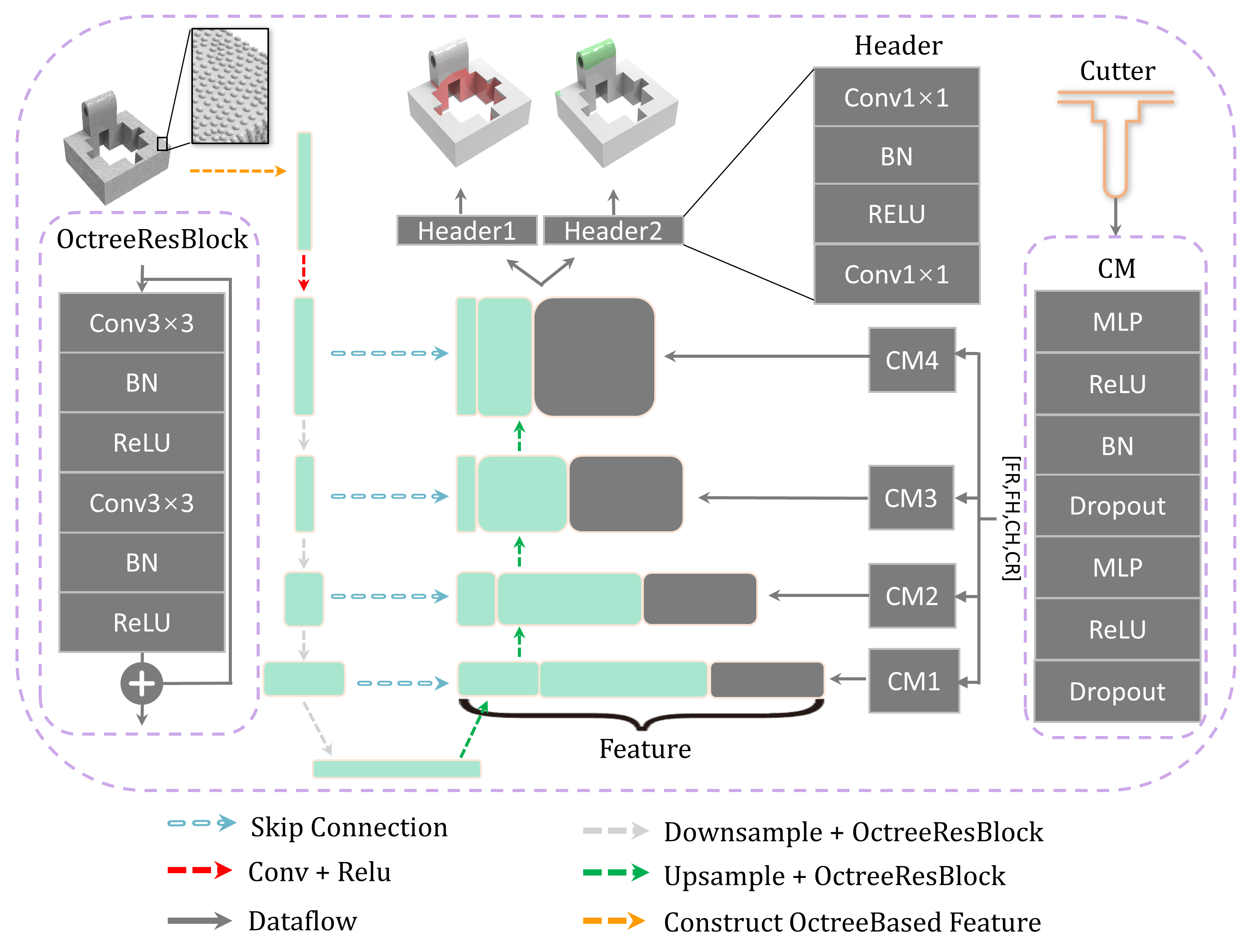

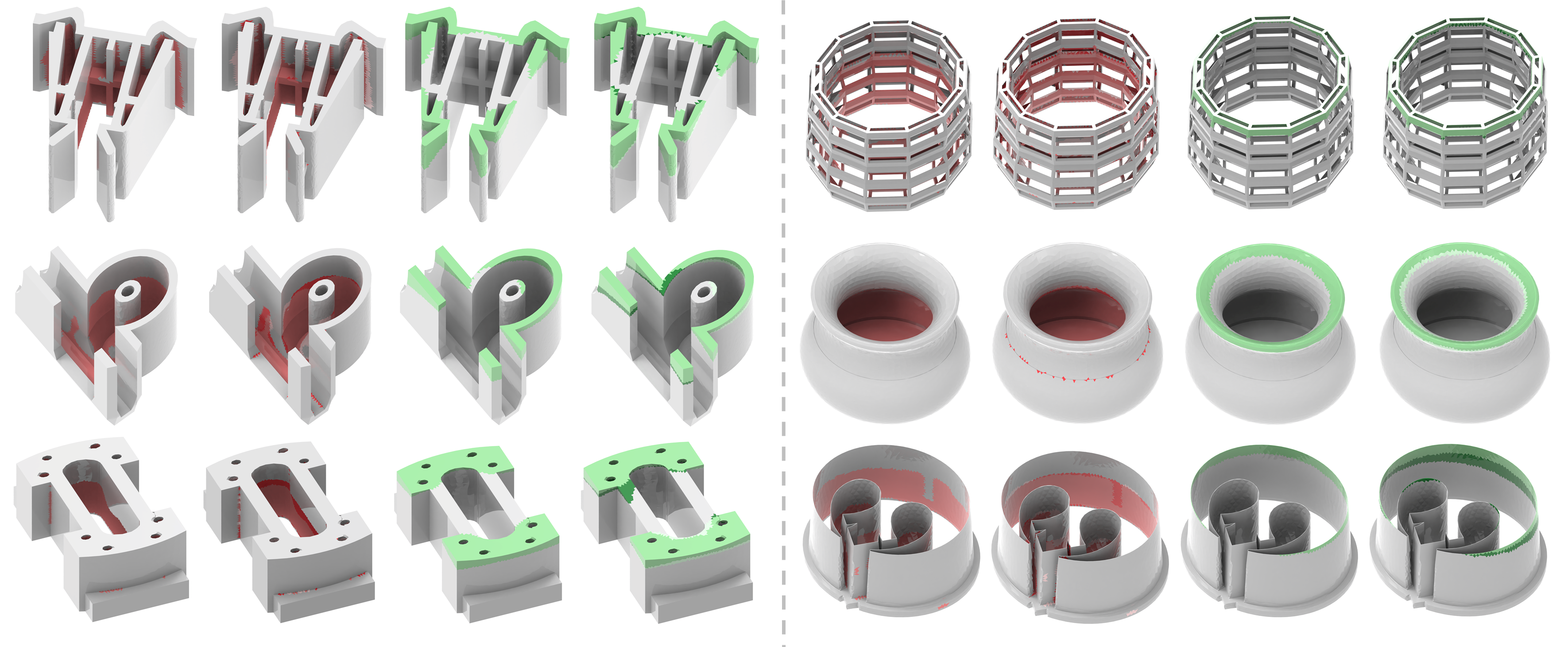

Fig. 2. DeepMill's network architecture. The input mesh is converted into a point cloud with normals, with each point corresponding to a Voronoi cell's site. Features are progressively extracted through the encoder, and the decoder, embedded with a cutter module (CM), restores spatial resolution. Both encoder and decoder are stacks of several octree-based residual blocks. Finally, two head layers perform dual-task binary segmentation on each site. Red and green represent the inaccessible and occlusion regions, respectively.
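The dual-head idea in the caption above can be illustrated with a small NumPy sketch. This is not the DeepMill network: the octree-based encoder and decoder are replaced by a single shared dense layer, the weights are random and untrained, and the way cutter parameters are fused with point features (a linear embedding added to every point) is an assumption made only for illustration. The class name `DualHeadSketch`, the six-value point feature (position plus normal), and the four-value cutter vector are all hypothetical.

```python
import numpy as np


def sigmoid(x):
    """Logistic function mapping logits to probabilities in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))


class DualHeadSketch:
    """Toy stand-in for a cutter-aware dual-head per-point classifier.

    Assumptions (not taken from the paper): additive cutter fusion,
    one shared dense layer, random untrained weights.
    """

    def __init__(self, feat_dim, cutter_dim, hidden=16, seed=0):
        rng = np.random.default_rng(seed)
        self.w_cutter = rng.normal(0.0, 0.1, (cutter_dim, feat_dim))
        self.w_shared = rng.normal(0.0, 0.1, (feat_dim, hidden))
        self.w_inaccessible = rng.normal(0.0, 0.1, (hidden, 1))
        self.w_occlusion = rng.normal(0.0, 0.1, (hidden, 1))

    def forward(self, point_feats, cutter_params):
        # point_feats: (N, feat_dim); cutter_params: (cutter_dim,)
        cutter_emb = cutter_params @ self.w_cutter      # (feat_dim,)
        fused = point_feats + cutter_emb                # broadcast over N points
        shared = np.maximum(fused @ self.w_shared, 0.0)  # shared trunk with ReLU
        # Two independent binary heads read the same shared features.
        p_inaccessible = sigmoid(shared @ self.w_inaccessible)[:, 0]
        p_occlusion = sigmoid(shared @ self.w_occlusion)[:, 0]
        return p_inaccessible, p_occlusion


# Usage: 5 points with position + normal (6 values), 4 hypothetical cutter values.
rng = np.random.default_rng(1)
points = rng.normal(size=(5, 6))
cutter = np.array([1.0, 2.0, 3.0, 4.0])
model = DualHeadSketch(feat_dim=6, cutter_dim=4)
p_inacc, p_occ = model.forward(points, cutter)
```

Thresholding each probability vector (for example at 0.5) would give the two per-site binary masks. Sharing one trunk between the heads lets both tasks reuse the same geometric features while keeping their outputs independent.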

Results

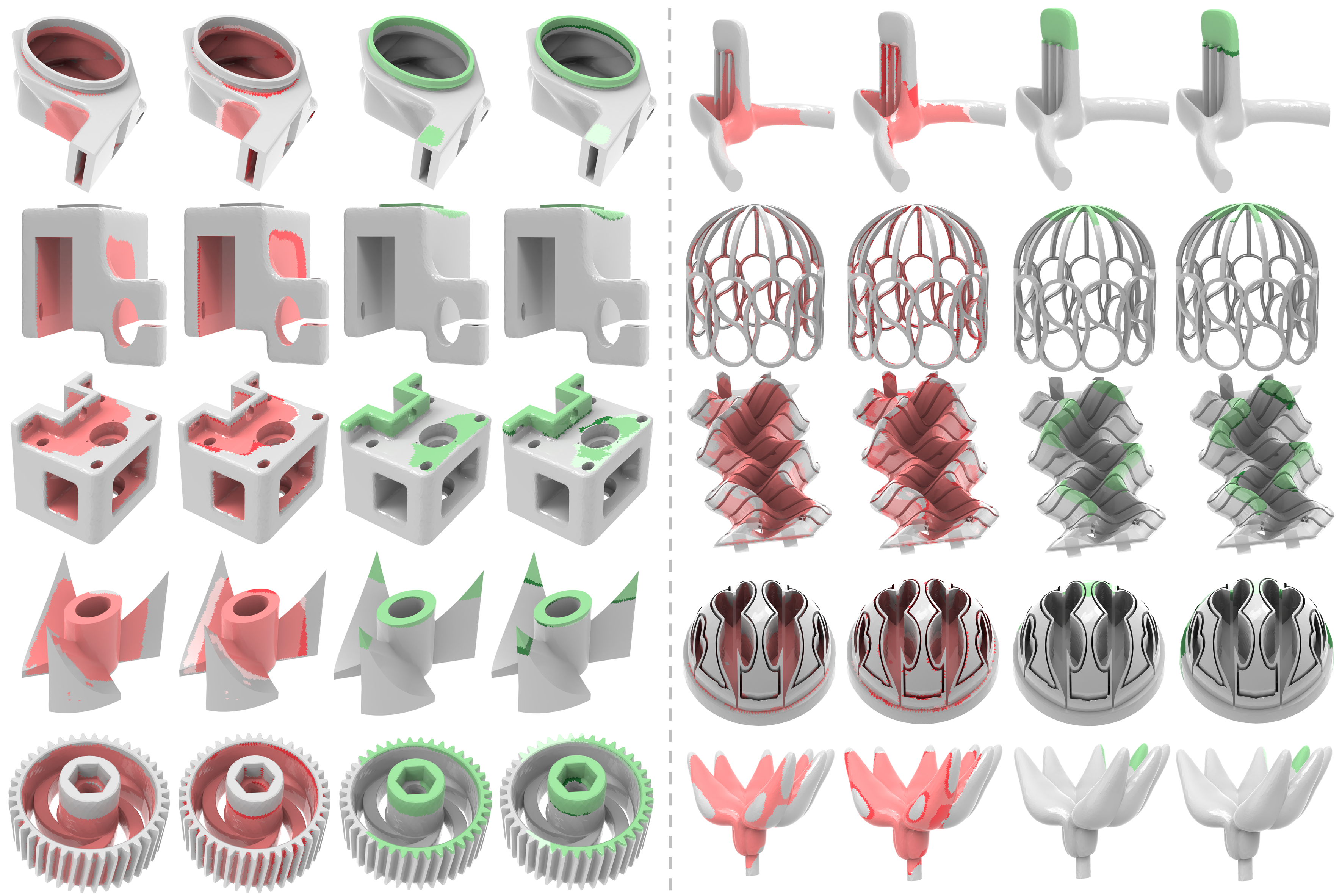

Fig. 3. The gallery of DeepMill prediction results. On the left are CAD shapes, and on the right are freeform shapes. The cutter size used for each shape is randomly generated. For each row of shapes, the first and third columns show the inaccessible and occlusion regions predicted by DeepMill. In the second and fourth columns, darker shades represent under-predicted areas, while lighter shades indicate over-predicted areas.
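The error visualization described above can be reproduced from per-point binary labels. The sketch below assumes that "under-predicted" means the reference geometric method marks a point but the network does not, and "over-predicted" means the reverse; the function names `error_map` and `summarize` are hypothetical, and the accuracy here is plain per-point agreement, which may differ from the metric used in the paper.

```python
from typing import Dict, List, Sequence


def error_map(pred: Sequence[int], truth: Sequence[int]) -> List[str]:
    """Label each point as 'correct', 'under', or 'over'."""
    if len(pred) != len(truth):
        raise ValueError("pred and truth must have the same length")
    labels = []
    for p, t in zip(pred, truth):
        if p == t:
            labels.append("correct")
        elif t == 1:
            # Region exists in the reference but was missed by the prediction.
            labels.append("under")
        else:
            # Region was predicted but is absent from the reference.
            labels.append("over")
    return labels


def summarize(pred: Sequence[int], truth: Sequence[int]) -> Dict[str, float]:
    """Return the fraction of correct, under- and over-predicted points."""
    labels = error_map(pred, truth)
    if not labels:
        raise ValueError("at least one point is required")
    n = len(labels)
    return {
        "accuracy": labels.count("correct") / n,
        "under": labels.count("under") / n,
        "over": labels.count("over") / n,
    }


# Usage: 1 marks a point inside the region (inaccessible or occluded).
stats = summarize(pred=[1, 0, 1, 0], truth=[1, 1, 0, 0])
```

Mapping the `under` and `over` labels to darker and lighter shades per point gives a rendering in the spirit of the second and fourth columns.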

Fig. 4. DeepMill prediction results with extreme cutter sizes. After data generated with extreme cutters was added to the training set, DeepMill was able to extend its prediction capability to cases involving extreme cutters.

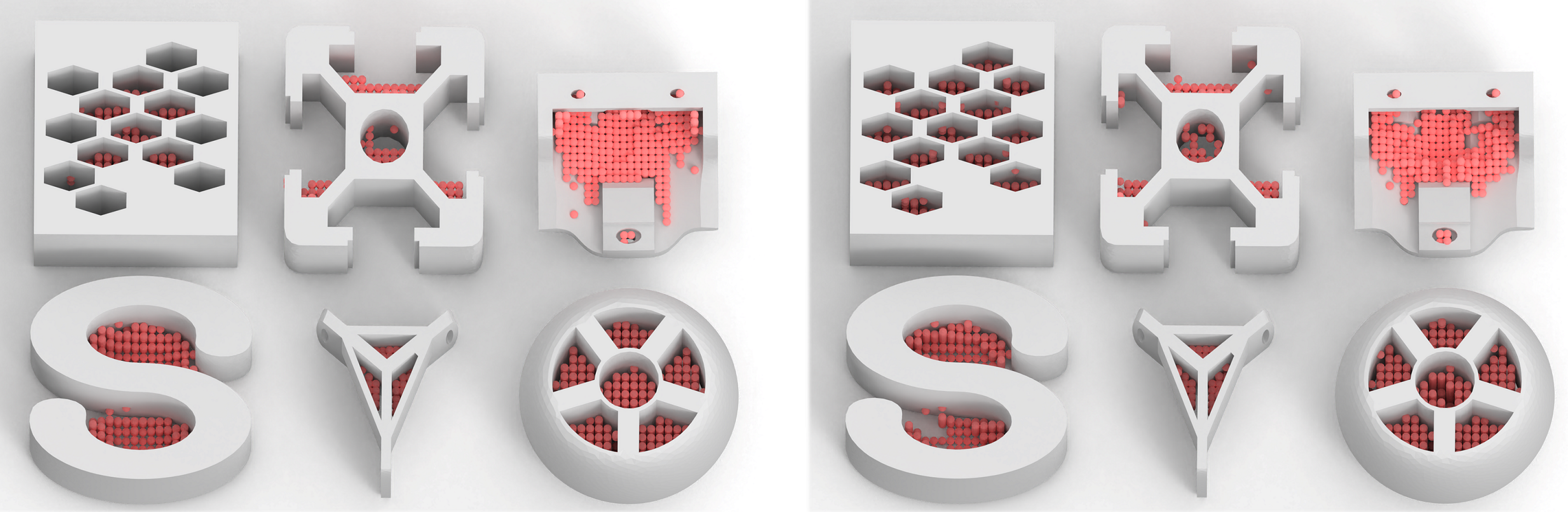

Fig. 5. Illustration of accessibility analysis within the volume. The red points represent inaccessible sampling points. The top shows the results predicted by DeepMill, and the bottom shows the results obtained by the geometric method.

Downloads